Is AI a Flatterer?

Maybe. But our recent past can provide guidance: a presidential cautionary tale.

“Sycophant” has been on our tongues a lot lately. The word has gained prominence with the growing presence of autocrats in our public discourse and, interestingly, with our impressions of AI.

The first time I heard the word in relation to AI was several years ago. Mark Cuban was on a podcast talking about the different AI platforms, commenting on which ones were effusive about the user being smart and savvy.

Cuban, it seems to me, is a shrewd person who can separate fact from fiction. That’s a big compliment coming from someone who considered his ownership of the Dallas Mavericks to be on the side of bad sportsmanship. (To be fair, we have been avid Warriors fans for twenty years.) When I heard his comment about sycophancy in AI, I started thinking: is it true? Is it something to worry about?

Worth Considering

His remark came months after the release of ChatGPT. I had played around a bit with the platform myself, and I saw what he was saying. ChatGPT tended to want me to feel like it was an infinitely capable, loyal ally, one who couldn’t help but see the sheer genius in most of my questions and assessments.

Before going much further, let’s define our terms. What exactly is a sycophant? Here it is, from the Merriam-Webster Dictionary:

Sycophant: a servile self-seeking flatterer : one who praises those in power in order to gain their approval… a fawning sycophant… bumbling sycophants whose self-importance far outstrips their actual abilities. —Alison Herman

Might AI flatter us to make us loyal customers? To capture our trust?

I have used ChatGPT and Claude a lot as research assistants and writing editors. I’m not sure I could pull off these essays without them.

But I’ve noticed how friendly things can start to seem. Of course, I can only testify to relatively pedestrian uses of AI: writing essays and researching construction products and techniques. I don’t move large sums of money around or push AI to the limit with behavioral forecasting or war gaming. But I have seen it from many sides and have watched it grow from its infancy as a public service. I’ll give Cuban credit. I think there is something to worry about.

Ups and Downs

We tend to invest trust in whatever platform we use. We come to it with questions and tasks, and it comes back at us with answers. Pretty much anything you could ask an expert, you can ask AI. It will respond with confidence, usually deserved if not always, and far more often deserved than it was two years ago.

And it also comes back with a certain tone toward you, the user.

AI seems trained to know that we are customers and that, as the age-old adage goes, “the customer is king.” The AI trainer’s incentive is to serve people, vast numbers of them, and that means keeping people feeling good about their interactions with the product and, when possible, feeling good about themselves.

Many times I’ve had intuitions that I want AI to chase down, and it will not only respond with a detailed answer but almost always preface it with a compliment such as “your insight was correct about…” Or, even when it can’t find something I suspect is out there, it will be diplomatic: “while you are right about this, I couldn’t find that, but maybe we could look into this angle instead…”

The “we” is critical. It wants to come off as human and collaborative.

The kindness and collaborative posture could be seen as sycophantic, but I would say not. That sense of the collaborative “we” is an ethos worth establishing among employees in any workplace, AI or no AI. It is a feeling of ease and goodwill that any human interface would want to emulate.

Not only that, but I’ve detected a tempering of the user-as-genius language over time. Maybe AI is getting not only more capable but wiser in how it interacts with us.

So, on a tactical level, I find it less a flatterer than rigorously friendly. Flattery, as we hear about it in our public discourse, is what aides do around Trump to obscure bad news. AI tends to break bad news, but in a diplomatic way. When I’m researching things I suspect are true, it has been more than willing to let me know I’m going down the wrong path, but it will be nice about it. It’s learned human diplomacy. Probably better than I have.

On what you could call a strategic level, though, things look different and possibly more dangerous for the user.

Danger Zone

I’m surprised by how well AI can put answers in context. Let’s say I put forward a hypothesis that Congress is cutting budgets because of the Republicans’ view on something. It will often come back with a full rundown of when that’s true and when it’s not, then try to identify the logic behind the discrepancy.

But what it can’t yet do is bring a really accurate perspective to bear on the truth. I remember a project where I decided that something AI had assured me was totally sound needed to be completely abandoned. I had thought about it more, and a larger context made my previous point moot. I had AI start doing research on that larger context. AI agreed, acknowledged that the bigger picture changed the nature of my conclusions, and nearly apologized for not seeing it sooner.

Nice. But before that came the danger. There had been a lot of kind words and an enthusiastic feeling of arriving at some hidden truth. AI was building me up, convincing me I was on the right path when I wasn’t. And it gave me lots of reasons to feel self-satisfied about the diligent work that got me there.

So, at my humble level of working with artificial intelligence, the self-satisfaction AI instills in the user now seems like a danger inherent to it. Critical thinking increases on the way to a certain point, then gets suspended once you’ve arrived and assessed where you are.

Of course it’s always necessary to do wider research. You can’t base research on one platform any more than you can base it on one source.

Sycophancy in Context

So, does this amount to sycophancy? No and yes. No, in the sense that most of the work it does with us is aboveboard. Yes, in that an underlying tone can become deceptive and therefore somewhat dangerous. Is there a malign purpose behind it, as with a Trump flatterer? In the sense that it wants to build trust for its own success, I suppose so.

I think Mark Cuban was perhaps referring to an earlier phase, when AI was right out of the gate and more blatantly sycophantic. In my experience, it is less guilty of outright flattery when it comes to everyday problem solving and drafting. But it can shift our outlooks and feelings in subtle ways that are hard to identify or guard against. And of course it probably customizes how it interacts with each individual.

Taking the side of AI for a moment, though: this is something you could say about any employee or friend helping you. It doesn’t take a large language model to get caught up in the success of the moment, lose a sense of perspective, or be too congratulatory.

Real Concern

It’s interesting: as I was composing this today, Garry Kasparov said on a podcast that Putin’s world is breaking down and that Putin is becoming more prone to the dangers of sycophancy from those around him. That brought me back to the first reason the word has spread over the last 20 years: the growing discussion of autocrats and autocracy in our political discourse.

Sycophancy is not harmless. Like loose lips, it can sink ships. In small amounts it may not be that dangerous, but when dishonesty and illusion start becoming common tools of the trade, can’t sycophancy become a sign of imminent doom? It means that someone powerful, just like the pedestrian user of AI, has suspended critical thinking and is trying to bend the world around them to their fantasy.

Twenty years ago, I barely remembered what the word meant. How did it come to be so much in the air? Surprise. Trump has everything to do with the word’s ascent in our public discourse, and that’s not just a fantasy. It’s a fact easily seen in Google Trends, which anyone can use to track how often people search for a given word.

Once Again, He’s in the Center

Trump himself popularized the concept of sycophancy, ushering the word into unprecedented familiarity in December 2016. Google Trends data begins in 2004, and that year the word had a modest bump in interest when Merriam-Webster made it the “Word of the Day.” After that came a long slog of occasional, minimal interest. Then, in December 2016, there was a huge spike.

Google Trends doesn’t explain why words rise in prominence, only that they do. But we all, I assume, remember December 2016: Trump assembling his first administration, famous strangers arriving at Trump Tower to audition for cabinet positions. Many, like Rick Perry, would become the yes men of Trump 1.0.

But you might also recall that Trump’s first term still had some checks and balances. Those checks offer a model for keeping our own interactions with AI honest.

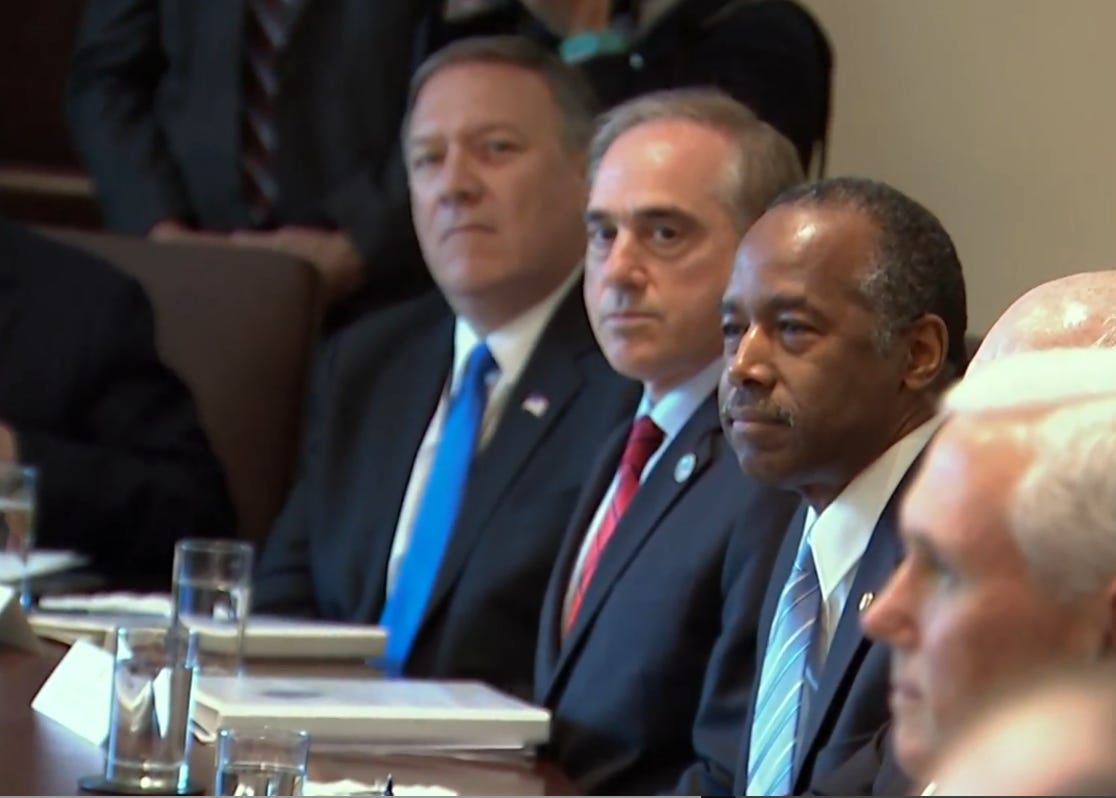

Perhaps the all-time best illustration of sycophancy in a social setting was the first full cabinet meeting Trump held, in June 2017. Trump had assembled all these folks, and they clearly knew this would be unlike any cabinet meeting in our history. It wasn’t a preview of how our new team of professionals was going to do their super-important jobs. It was time to build up the boss. Slather on the praise.

Ugly Experience, Instructive Lesson

The news cameras went around the room. The New York Times captured it for all time:

“The greatest privilege of my life is to serve as vice president to the president who’s keeping his word to the American people,” Mike Pence said, starting things off…

Sonny Perdue, the agriculture secretary, had just returned from Mississippi and had a message to deliver. “They love you there,” he offered, grinning across the antique table at Mr. Trump.

Reince Priebus, the chief of staff whose job insecurity had been the subject of endless speculation, outdid them all, telling the president — and the assembled news cameras — “We thank you for the opportunity and the blessing to serve your agenda.”

If your AI platform ever starts sounding like this, maybe it’s time to pull back and get some perspective.

The Times went on and, in retrospect, gave us a good way to think about an appropriate tone when getting important advice from AI platforms, or from people, for that matter:

Jim Mattis, the secretary of defense — whose reputation for independence had been a comfort to Mr. Trump’s critics — refrained from personally praising the president, instead aiming his comments at American troops fighting and dying for their country.

“Mr. President, it’s an honor to represent the men and women of the Department of Defense, and we are grateful for the sacrifices our people are making in order to strengthen our military so our diplomats always negotiate from a position of strength,” Mr. Mattis said as Mr. Trump sat, stern-faced.

But the meeting still struck White House officials of past administrations as odd.

“I ran 16 Cabinet meetings during Obama’s 1st term,” Chris Lu, former President Barack Obama’s cabinet secretary, wrote on Twitter. “Our Cabinet was never told to sing Obama’s praises. He wanted candid advice not adulation.”